Summary:

- March 3rd, 2026: AI-Native Networks: 12+ Operators Lock In 6G at MWC 2026

- March 16th, 2026: NVIDIA NemoClaw: 1-Command AI Agent Stack for OpenClaw

- March 18th, 2026: Google AI Studio Goes Full-Stack: 7 New Vibe Coding Features

- March 18th, 2026: Google Stitch Reborn: AI-Native UI Canvas Kills the Wireframe

- March 19th, 2026: MAI-Image-2 Debuts at #3: Microsoft Enters Top Text-to-Image Labs

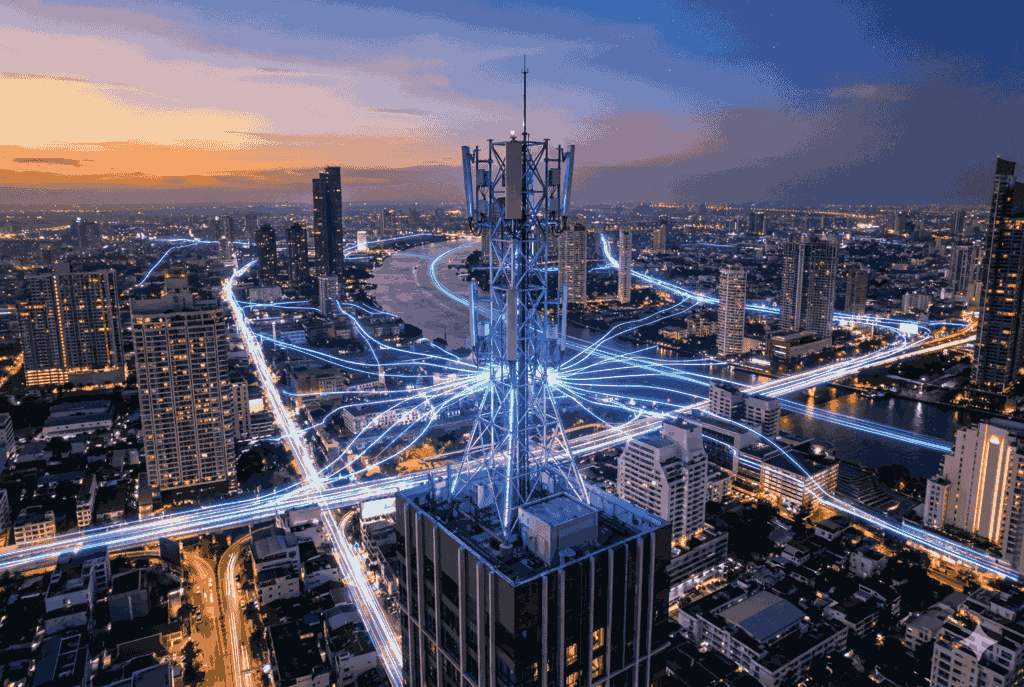

AI-Native Networks: 12+ Operators Lock In 6G at MWC 2026

Date: March 3rd, 2026

Meta Description: Nvidia secures 12+ operator commitments, Nokia shares jump 5.4%, and a 130-company coalition targets AI-native 6G. What MWC 2026 actually proved and what's next.

MWC 2026 in Barcelona did not just reiterate the AI-RAN vision. It delivered. A wave of announcements from the world's largest telecom vendors, chipmakers, and operators produced live field trial results, commercial product launches, open-source toolkits, and a multi-operator coalition formally committing to build 6G on AI-native foundations.

For enterprise and IT decision-makers, the signal is clear: the architectural shift in telecom infrastructure will soon reshape how connectivity is delivered, managed, and monetised.

AI-Native 6G Coalition Unites 130+ Companies at MWC 2026

Nvidia secured commitments from more than 12 global operators and technology companies including BT Group, Deutsche Telekom, Ericsson, Nokia, SK Telecom, SoftBank, T-Mobile, Cisco, and Booz Allen to build 6G on open, secure, and AI-native software-defined platforms. The initiative is backed by ongoing government collaborations across the US, UK, Europe, Japan, and Korea.

Nvidia founder and CEO Jensen Huang stated that AI is redefining computing and driving the largest infrastructure buildout in human history, with telecommunications as the next frontier. The company is a founding member of the AI-RAN Alliance, which now counts over 130 participating companies, and has joined the FutureG Office-led OCUDU Initiative in the US to accelerate open, software-defined, AI-native 6G architectures.

Nvidia's Open-Source Toolkit Targets Live Network Operations

Nvidia also released a suite of open-source tools for network operators: a 30-billion-parameter Nemotron Large Telco Model (LTM), developed with AdaptKey AI and fine-tuned on telecom datasets; a co-published open-source guide with Tech Mahindra for building AI agents that reason like NOC engineers; and new Nvidia Blueprints targeting RAN energy efficiency and network configuration.

Nokia completed functional tests of its anyRAN software on Nvidia's GPU-accelerated AI-RAN platform with T-Mobile US, Indosat Ooredoo Hutchison (IOH), and SoftBank Corp, moving validation out of lab environments and into live, over-the-air conditions. Nokia shares rose 5.4% on the day of the announcement. Ericsson, taking a different path, unveiled 10 new AI-ready radios built on its own purpose-built silicon featuring neural network accelerators, delivering up to 7x faster response times with no Nvidia GPUs required. The company also announced a broad collaboration with Intel to accelerate AI-native 6G ecosystem readiness.

Why AI-RAN Is Accelerating and What Operators Are Building Next

The shift from concept to commercial infrastructure is visible in both the operator strategies and the hardware ecosystem forming around AI-RAN.

SK Telecom outlined a full-stack AI-native rebuild, from its network core to customer service systems. SoftBank demonstrated its Autonomous Agentic AI-RAN system, enabling networks to manage themselves based on natural-language operator intent rather than manual instruction. On the hardware side, Quanta Cloud Technology, Supermicro, MSI, and Lanner Electronics all announced purpose-built AI-RAN products at MWC 2026.

Key commitments and data points shaping the roadmap:

- SK Telecom plans to upgrade its sovereign AI foundation model from 519 billion to over 1 trillion parameters, with a new AI data centre in Korea built in collaboration with OpenAI

- SoftBank's AITRAS Orchestrator can identify spare RAN compute capacity to run third-party AI workloads, pointing to a new operator monetisation path beyond connectivity

- IOH completed Southeast Asia's first AI-RAN-powered Layer 3 5G call at MWC, with AI and RAN workloads running simultaneously on shared GPU infrastructure

- 77% of respondents in Nvidia's State of AI in Telecom report anticipate a significantly faster deployment timeline for AI-native wireless architecture than for prior network generations

- AMD positioned its EPYC 8005 edge platform and Open Telco AI initiative as an alternative compute path for operators moving from AI pilots to production

- Cassava Technologies is deploying Nvidia's network configuration blueprint for an autonomous network platform across Africa's multi-vendor mobile environment

The architecture debate between Ericsson's custom silicon path and Nokia-Nvidia's $1 billion GPU-accelerated approach remains open and will shape operator procurement decisions for years. What MWC 2026 made clear is that AI-native networks are no longer a research agenda. The field trials are live, the hardware is shipping, and the coalitions are in place.

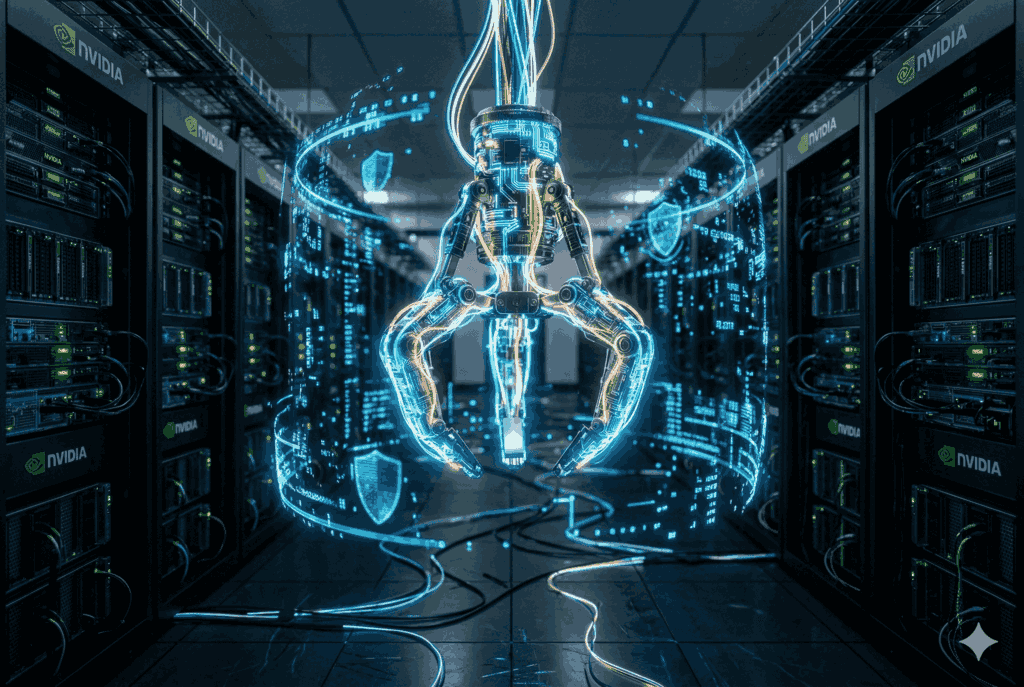

NVIDIA NemoClaw: 1-Command AI Agent Stack for OpenClaw

Date: March 16th, 2026

Meta Description: NVIDIA's NemoClaw brings Nemotron models + OpenShell to OpenClaw in a single command, adding privacy and security for always-on AI agents across RTX PCs to DGX Spark.

At GTC on March 16, 2026, NVIDIA announced NemoClaw, a full-stack software solution for the OpenClaw agent platform that installs NVIDIA Nemotron models and the newly released NVIDIA OpenShell runtime in a single command. The release adds privacy controls, security guardrails, and dedicated compute infrastructure to autonomous AI agents, called claws, making them more trustworthy and scalable for both individual users and enterprise deployments.

OpenClaw, described by NVIDIA CEO Jensen Huang as the fastest-growing open source project in history, now has a structured infrastructure layer beneath it. NemoClaw is that layer, providing the access controls, privacy routing, and compute foundation that always-on agents require to operate continuously and securely.

NemoClaw Delivers Single-Command Setup With OpenShell Sandbox and Privacy Router

NemoClaw is powered by NVIDIA Agent Toolkit software and installs OpenShell to provide an isolated sandbox environment that enforces data privacy and security across autonomous agent operations. The system is designed to give agents the permissions they need to be productive while keeping network access, policy enforcement, and privacy boundaries under defined control.

The stack is model-agnostic and supports any coding agent. For open model workflows, it runs NVIDIA Nemotron locally on dedicated hardware. For tasks requiring frontier model capabilities, a built-in privacy router channels requests to cloud-based models without exposing local data. This local-cloud combination forms the foundation for agents to develop new skills and complete tasks within policy-defined boundaries.
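The local-versus-cloud decision described above can be illustrated with a minimal sketch. It is not NemoClaw's actual router: the source does not say how local data is kept out of cloud requests, so the redaction step, the `route_request` function, the `Route` type, and the patterns below are all hypothetical and shown only to make the idea concrete.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for data that should never leave the local machine.
SENSITIVE_PATTERNS = [
    re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),  # email addresses
    re.compile(r"\b\d{13,16}\b"),             # long digit runs (card-like numbers)
]


@dataclass
class Route:
    target: str  # "local" or "cloud"
    prompt: str  # text that would be sent to the chosen model


def redact(text: str) -> str:
    """Replace every sensitive match with a placeholder."""
    for pattern in SENSITIVE_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text


def route_request(prompt: str, needs_frontier_model: bool) -> Route:
    """Assumed policy: stay local by default, redact before going to the cloud."""
    if not needs_frontier_model:
        return Route("local", prompt)
    return Route("cloud", redact(prompt))
```

Under this assumed policy, a request that stays local is passed through untouched, while a request that needs a frontier model is scrubbed before it leaves the machine.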

Runs Across RTX PCs, DGX Station, and DGX Spark

NemoClaw is hardware-flexible and designed to run around the clock on dedicated platforms. Supported systems span consumer and enterprise tiers: NVIDIA GeForce RTX PCs and laptops, NVIDIA RTX PRO-powered workstations, NVIDIA DGX Station, and NVIDIA DGX Spark AI supercomputers, all capable of providing the persistent local compute that always-on autonomous agents require.

Why NemoClaw Matters: OpenClaw's Missing Infrastructure Layer

The release addresses the structural gap that has limited OpenClaw's deployment at scale: the absence of a trusted, policy-enforcing runtime that can keep agents active, secure, and productively connected without requiring constant manual oversight.

- OpenClaw is described by NVIDIA as the fastest-growing open source project in history and positioned as the operating system for personal AI

- NemoClaw installs the entire stack (Nemotron models plus the OpenShell runtime) in a single command, removing setup friction for developers and non-technical users alike

- OpenShell provides an isolated sandbox separating agent activity from the host system, enforcing network and privacy guardrails at the infrastructure level

- A privacy router enables agents to use frontier cloud models without exposing sensitive local data, combining cloud reach with local control

- Supported hardware ranges from consumer GeForce RTX PCs to enterprise DGX Spark AI supercomputers, making the stack accessible across deployment scales

- GTC attendees can build and deploy a live AI assistant using NemoClaw at the build-a-claw event running March 16-19 in the GTC Park

OpenClaw creator Peter Steinberger framed the collaboration as constructing the claws and guardrails that allow anyone to build powerful, secure AI assistants. It is a signal that the open source ecosystem and NVIDIA's enterprise stack are now converging around a shared agent infrastructure rather than diverging. The question going forward is whether NemoClaw's security and privacy architecture will become the default trust layer for the broader autonomous agent ecosystem, or whether competing stacks will fragment what is currently a single fast-growing platform.

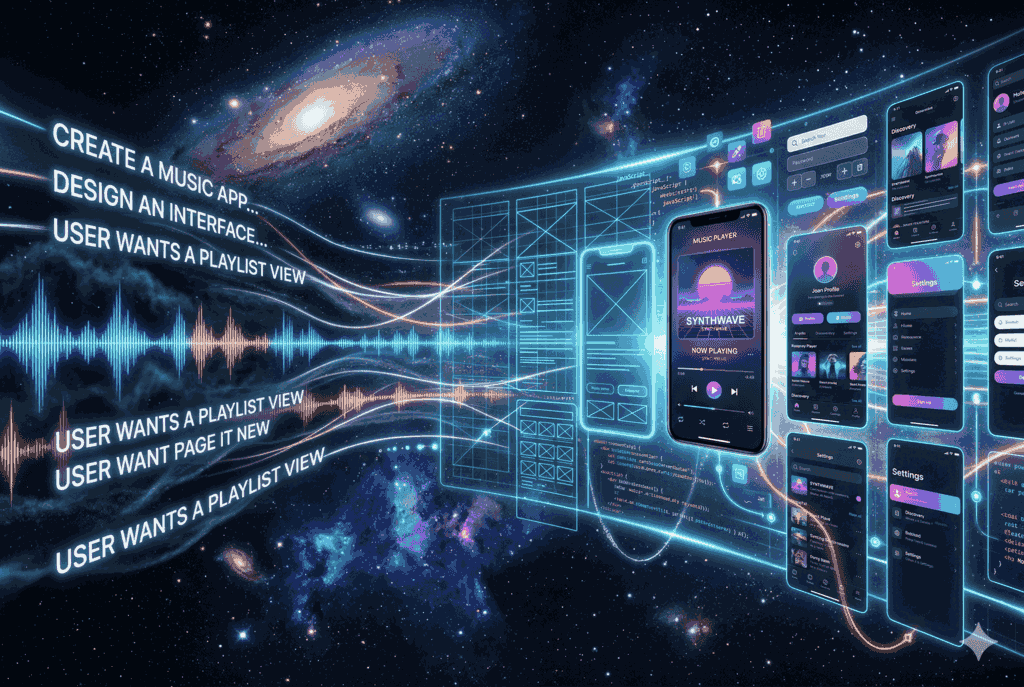

Google AI Studio Goes Full-Stack: 7 New Vibe Coding Features

Date: March 18th, 2026

Meta Description: Google AI Studio's Antigravity agent now builds multiplayer apps, adds Firebase auth, Secrets Manager, and Next.js support. Turn prompts into production apps today.

Google AI Studio launched a completely upgraded vibe coding experience on March 18, 2026, powered by the new Google Antigravity coding agent. The update moves the platform beyond prototype generation into full-stack, production-ready application development without users ever leaving the vibe coding environment.

The release pairs the Antigravity agent with a built-in Firebase integration, bringing secure user authentication via Firebase Authentication, real-time database provisioning through Cloud Firestore, and a new Secrets Manager for safely storing API credentials. The new experience has already been used internally at Google to build hundreds of thousands of apps over the past several months.

Antigravity Agent Builds Multiplayer, Auth, and Next.js Apps From Prompts

The upgraded Google AI Studio environment introduces a fundamentally more capable agent that maintains a deeper understanding of a project's full structure and chat history across sessions. The result is faster iteration and more precise multi-step code edits driven by simpler natural-language prompts.

The Antigravity agent now proactively detects when an app requires a database or authentication layer and, upon user approval, provisions both automatically: Cloud Firestore for persistent data storage and Firebase Authentication for secure Google sign-in. It also pulls from the full ecosystem of modern web libraries autonomously, installing tools like Framer Motion for animations or Shadcn for UI components without requiring the user to specify them explicitly.

Firebase Integration and Secrets Manager Close the Production Gap

The addition of a Secrets Manager in the Settings tab allows users to connect apps to live external services, including payment processors, custom databases, and Google Maps, by securely storing API credentials that the agent detects and calls when needed. Sessions are now persistent across devices: closing a browser tab no longer resets progress, enabling users to return to active projects at any point.
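The general pattern behind a secrets manager is that application code looks a credential up by name at runtime instead of hard-coding it. The sketch below shows that pattern only. The source does not describe how AI Studio exposes stored secrets to an app, so delivery through environment variables, the `get_secret` helper, and the `MAPS_API_KEY` name are assumptions made for illustration.

```python
import os
from typing import Mapping, Optional


class MissingSecretError(RuntimeError):
    """Raised when a required credential has not been configured."""


def get_secret(name: str, env: Optional[Mapping[str, str]] = None) -> str:
    """Look up a credential by name; fail loudly rather than run without it.

    Assumption: stored secrets reach the app as environment variables.
    """
    source = os.environ if env is None else env
    value = source.get(name, "").strip()
    if not value:
        raise MissingSecretError(f"secret {name!r} is not configured")
    return value


# Example call (hypothetical secret name); the key never appears in source code:
# maps_key = get_secret("MAPS_API_KEY")
```

Failing at lookup time keeps a missing key from surfacing later as an opaque error from the external service.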

What's Live Now and What's Coming Next in AI Studio

The full set of production capabilities shipping with this release reflects a push to close the gap between AI-generated prototypes and deployable software.

- Multiplayer support is now live: real-time games, collaborative workspaces, and shared tools that connect users instantly are all buildable from a single prompt

- Firebase Authentication and Cloud Firestore are provisioned automatically when the agent detects the need, requiring only user approval to activate

- Next.js joins React and Angular as supported frameworks, selectable via the updated Settings panel

- A Secrets Manager in Settings stores API credentials securely, enabling integrations with external services including Google Maps, payment processors, and custom databases

- Session persistence allows users to close and reopen projects without losing state, across any device

- The agent now maintains full project structure and chat history context, enabling complex apps to be built from progressively simpler prompts

- Coming soon: Google Workspace integration to connect Drive and Sheets directly to apps, plus a single-button path to deploy from Google AI Studio to Google Antigravity

The roadmap items, particularly the Workspace integration and one-click Antigravity deployment, signal that Google is positioning AI Studio as a continuous pipeline from idea to live production app, rather than a standalone prototyping tool. Whether the platform can maintain that trajectory as app complexity scales beyond demo use cases will determine how seriously it competes with dedicated full-stack development environments.

Google Stitch Reborn: AI-Native UI Canvas Kills the Wireframe

Date: March 18th, 2026

Meta Description: Google Labs evolves Stitch into a full AI-native design canvas with voice control, DESIGN.md, and MCP export. Turn natural language into high-fidelity UI in minutes.

Google Labs unveiled a major evolution of Stitch on March 18, 2026, transforming the tool from a design aid into a fully AI-native software design canvas. The platform now allows anyone, from professional designers to first-time founders, to generate, iterate, and collaborate on high-fidelity UI directly from natural language descriptions, bypassing the traditional wireframe-first workflow entirely.

The concept powering the update is what Google is calling "vibe design": a mode of working where users begin not with a layout but with a business objective, an emotional intent, or an inspirational reference, and let the AI build outward from there.

Google Stitch Gets AI-Native Canvas, Voice Agent, and DESIGN.md

The redesigned Stitch platform introduces four interconnected capabilities that collectively replace the conventional design process. The foundation is a new infinite canvas built for AI-native workflows, giving projects room to evolve from early ideation through working prototypes in a single environment. Users can bring ideas in any form (images, text, or code) directly onto the canvas as context.

Paired with the canvas is a new design agent that reasons across a project's full history and an Agent Manager that allows multiple design directions to run in parallel while keeping work organized. A separate feature, DESIGN.md, introduces an agent-friendly markdown file format for exporting and importing design system rules across projects and tools. Design systems can also be extracted from any live URL, removing the need to rebuild foundational styles from scratch across projects.
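To show why a markdown file suits agent-readable design rules, here is a minimal sketch of reading one. The source does not specify the DESIGN.md schema, so the layout assumed here (`## Section` headings followed by `- key: value` bullets), the sample values, and the `parse_design_md` function are all hypothetical.

```python
# Hypothetical DESIGN.md content; the real schema is not given in the source.
SAMPLE_DESIGN_MD = """\
# Design system
## Colors
- primary: #1A73E8
- surface: #FFFFFF
## Typography
- body: Inter 16px
"""


def parse_design_md(text: str) -> dict[str, dict[str, str]]:
    """Collect `- key: value` bullets under each `## Section` heading."""
    rules: dict[str, dict[str, str]] = {}
    section = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("## "):
            section = line[3:].strip()
            rules[section] = {}
        elif line.startswith("- ") and section is not None and ":" in line:
            key, value = line[2:].split(":", 1)
            rules[section][key.strip()] = value.strip()
    return rules


design_rules = parse_design_md(SAMPLE_DESIGN_MD)
```

With the sample above, `design_rules["Colors"]["primary"]` holds `#1A73E8`. A plain-text format like this can be read by people and agents alike and versioned alongside code.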

Voice Control and Real-Time Critique Now Built In

Stitch now supports direct voice interaction with the canvas. The agent can conduct real-time design critiques, interview the user to construct a new landing page, and execute live updates such as generating multiple menu variations or rendering a screen in different color palettes from spoken instruction. Static designs can also be converted into interactive prototypes instantly, with the system auto-generating logical next screens based on user click paths and a single "Play" button to preview full app flows.

Stitch Roadmap: MCP, SDK, and the Path From Design to Code

The update positions Stitch as a connective layer across the broader development workflow, not just a design tool operating in isolation.

- A Stitch MCP server and SDK are already released, enabling integration of Stitch capabilities into external tools via skills and APIs

- The Stitch Skills repository on GitHub has accumulated 2,400 stars, signaling active developer adoption ahead of the canvas launch

- Designs can be exported directly to developer tools including AI Studio and Antigravity, keeping design and engineering teams in sync without manual handoff

- DESIGN.md files can be imported into other Stitch projects, meaning established design systems transfer across work without being rebuilt

- Voice capabilities allow iterative design critique and real-time canvas updates, keeping creators in a continuous creative flow without switching tools

- The platform targets both professional designers exploring large numbers of variations and non-technical founders building their first product interface

The evolution of Stitch reflects Google Labs' broader push to collapse the gap between idea and functional software. What previously required days of back-and-forth between design and development teams is now framed as a minutes-long process on a single AI-native canvas. Whether the "vibe design" framing translates into sustained workflow adoption, particularly among teams with established design systems, will be the practical test this release now faces.

MAI-Image-2 Debuts at #3: Microsoft Enters Top Text-to-Image Labs

Date: March 19th, 2026

Meta Description: Microsoft's MAI-Image-2 lands #3 on Arena.ai's leaderboard, beats top image labs, and is now live on MAI Playground. See what changed and who gets API access first.

Microsoft AI launched MAI-Image-2, its second-generation text-to-image model, on March 19, 2026, placing the company among the top 3 text-to-image labs in the world according to the Arena.ai leaderboard. The release marks a significant step forward from its predecessor, MAI-Image-1, which debuted in the top 10 on the same leaderboard.

The model is immediately available via the MAI Playground, where users can experiment and submit feedback directly to the development team. API access has been opened to select Microsoft enterprise customers, with WPP named as an early commercial partner, and will expand to all developers through Microsoft Foundry in the near future.

MAI-Image-2 Hits #3 on Arena.ai With 3 Core Creative Upgrades

Built in direct collaboration with photographers, designers, and visual storytellers, MAI-Image-2 targets the practical gaps that creatives encounter most in daily production work. The model advances across three specific capabilities that previous iterations struggled to deliver consistently.

The first is enhanced photorealism: natural lighting, accurate skin tones, and lived-in environments that reduce the need for post-production correction. The second is reliable in-image text generation, enabling consistent output for infographics, posters, slides, and diagrams with high fidelity between prompt and result. The third is rich scene generation, covering surreal compositions, ornate detail, and cinematic concepts at a level of ambition that earlier models frequently failed to execute.

GB200 Cluster Now Operational at Microsoft AI Lab

The Microsoft AI Superintelligence (MSI) team confirmed that its next-generation GB200 compute cluster is now fully operational, underpinning the infrastructure behind MAI-Image-2 and future model generations. The team described its setup as a lean, fast-moving lab working on an ambitious roadmap with direct reach to billions of users through Microsoft product integrations.

MAI-Image-2 Rollout: Copilot, Bing, Foundry Access on the Way

The commercial and developer rollout for MAI-Image-2 is structured in phases, with several access paths now confirmed or actively opening.

- MAI Playground access is live today for all users at playground.microsoft.ai

- Copilot and Bing Image Creator integrations are beginning to roll out

- API access is currently available for select enterprise customers, with WPP confirmed as an early commercial partner for image generation at scale

- Full developer access will open via Microsoft Foundry soon; a commercial interest application form is available now

- The GB200 cluster is operational, positioning MAI for its next generation of model development

- MAI-Image-1, launched in late 2025, debuted in the Arena.ai top 10; MAI-Image-2 advances that ranking to #3 within a single model cycle

The progression from top-10 debut to a #3 global ranking in one generation signals that Microsoft's in-house image model program is compressing development timelines at a pace that is beginning to pressure the leading standalone text-to-image labs. The next release from the MSI team has not been dated, but the team confirmed that further model announcements are already in progress.